What makes famous math problems hard (and why you should still work on them)

In my career as a research mathematician, I've always enjoyed working on "ordinary" research problems - the sort of problems that advance the state of knowledge in my field, are interesting to think about, occasionally lead to genuinely beautiful new ideas, and have a good chance of leading to published papers and other hallmarks of career recognition.

But I've also spent a lot of time - I'm embarrassed to admit how much exactly - thinking about Famous Open Problems (FOPs). These range from problems that are "locally famous" among people working in a specific area of math (critical exponents in percolation theory, the connective constant of the square lattice), through problems that are "moderately famous" and enjoy relatively widespread recognition across all of mathematics (the sphere packing problem, the moving sofa problem, the Collatz conjecture, irrationality of odd zeta values), to universally famous problems such as P vs NP, or the FOPpiest of all FOPs, the Riemann hypothesis.

Anyone who has worked seriously on even non-famous open problems can tell you: they are hard! It's true; mathematics research is generally hard. I have tried and failed to solve many problems of very humble pedigree that only a few people would care about even if I were to solve them. Which may make you wonder: if even that is hard, surely spending significant time attacking real FOPs that so many other people have already tried and (so far) failed to solve is a waste of time and a recipe for frustration, potential career failure, and a possible spiral into insanity - right?

Well, yes and no. I want to offer here my perspective on what exactly makes famous open problems so hard, what we mean when we say a problem is hard, and some good reasons why it may be a good idea for a researcher to think seriously about them after all.

What makes a problem "hard"?

Mathematicians like to talk about whether an open problem is hard or easy. To be successful in mathematics research you have to develop an intuitive feel for how hard something is. Aim too low and you'll be consigned to publishing a lot of mediocre work. Aim too high and you'll publish nothing. As a mentor to PhD students, you also have to know whether the problems you suggest to them are of a reasonable level of difficulty. Aim too high and they'll flunk out or end up switching advisors. Aim too low and they'll... probably still graduate, but maybe produce work they're less proud of than they might have been otherwise.

But what do we mean when we say that a problem is hard? I think there are different metrics one can apply, and they overlap partially but do not exactly coincide:

A problem is deemed hard if a lot of people have tried and failed to solve it. I call this "sociological hardness". Journals often apply this criterion when deciding whether the work you're submitting to them is important enough to publish. It's relatively easy to apply (because it's usually easy to estimate how many people have worked on a problem from the size of the literature on it), but it's also at some level a lazy criterion. Certainly there are many insanely hard problems that few people have even made a serious attempt at solving, either because they are not that fundamental or interesting, or because for one reason or another the problem has not received much attention and does not loom large in our collective mathematical consciousness.

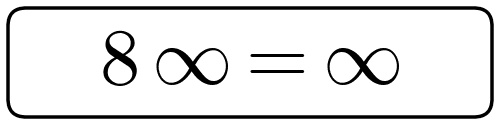

A problem is hard if it requires genuinely novel ideas: it does not readily yield to the toolkit of techniques and ideas currently in our possession. I call this "conceptual hardness". Sometimes the conceptual hardness is so great that no one even has a good idea of where to start looking for the right tools. (I feel this is the case with the Riemann hypothesis, for example.)

A problem is hard if it can be solved using ideas that are roughly in the ballpark of what is known, but only with a very large amount of effort, time, and persistence. Some well-known breakthroughs in mathematics, like the proofs of the four color theorem and the Kepler conjecture, can be seen as cracking through that final barrier of difficulty by putting together ideas and methods that were largely known at the time (and expected to work as a way of arriving at a proof), in a way that required a tremendous amount of patience and effort, and some extra ingenuity. These are remarkable achievements, but I feel they belong in a different category from breakthroughs achieved by overcoming a conceptual hardness barrier.

Why I like to think about famous open problems

I don't like to think about famous open problems "because I believe I might solve them". To think about a FOP for that reason would be crazy. But there are nonetheless other good reasons to do it. Here are the main ones for me:

Because it's fun. Thinking about FOPs is typically more exciting than thinking about "ordinary" research problems. These problems are famous for a reason - they capture our imaginations and are genuinely very fun (sometimes addictively so) to think about.

Because you learn from firsthand experience why the problem is hard and why it is important. Steve Jobs famously said "Don't be trapped by dogma - which is living with the results of other people's thinking." He was speaking in a different context, but this applies to math research as much as to anything else. Our ideas of which problems are hard or important are often handed down to us by our seniors, and it takes time and patience to develop your own independent point of view. I used to believe that the Riemann hypothesis is important because I was told it was (usually with some watered-down pop-sci explanation of why that was the case). It took me many years of study and thinking to arrive at my own personal set of beliefs about both the problem's hardness and its importance. These beliefs are a lot more nuanced than the watered-down explanations I had read previously. In a sense, I feel I have a deep familiarity with and appreciation for the problem. That is quite satisfying, perhaps almost as satisfying as it would be to know the actual solution.

Because non-famous open problems are also hard. I mentioned above that research is generally hard, and that I failed to solve many of the non-famous open problems I tried working on, where the reward for solving them wouldn't have been that great anyway. Well, if you're going to fail at something, wouldn't you rather fail at something super-exciting and ambitious than at something almost no one even cares about?

Of course, I'm being (only slightly) facetious here. Obviously you shouldn't just be making choices about what to fail at! A level-headed person who wants to have a successful career and to contribute something meaningful to the world should naturally also plan to at least occasionally not fail. And for the not-failing category, it's almost always better to work on open problems that are not famous. But I feel the point in the preceding paragraph is still valid, and suggests that, for the portion of your research time that you allocate to things that will likely fail, you should occasionally GO BIG and work on FOPs.

Because it leads to new research. One quality that famous open problems tend to have is that they stand at the confluence of a lot of exciting ideas and research topics, rather than being isolated questions that don't relate to anything else mathematicians care about. (One possible exception to this that I've had experience with is the moving sofa problem; while it does relate to other interesting things, those connections are a bit tenuous, and in fact advances on the moving sofa problem such as its remarkable solution by Jineon Baek in 2024 do not immediately translate to advances in other research areas. But I predict that will actually change over time, and his ingenious ideas will find surprising applications elsewhere.) For this reason, working on FOPs often results in making new discoveries that aren't as spectacular as solving the open problem you set out to solve, but are interesting in their own right. Many of my "ordinary" research papers came out of research that was motivated by working on problems that were a lot more difficult than the problem I ended up solving.

Because it makes me smarter. Trying and failing to solve open problems (famous, or not) forces you to think very hard about a topic and explore different angles for attack. Usually in the process you learn a lot: about the problem itself, about what's been tried by other people, about connections to other fields, and much more. The knowledge you pick up may serve you well down the road when you do "ordinary" research, and produce tangible benefits you would not necessarily have expected. In other words, "failing" in a local sense can still lead to real successes later on.

Because I can. One of the joys of being in academia is the freedom you have to pursue your own interests and to work on whatever you are passionate about. One should not take this freedom to an unhealthy extreme: even having tenure does not mean you should spend 100% of your time on research that is almost guaranteed to fail. I mean, technically you can and you'd still have a job, but having a job is a low bar to clear. Researchers who get tenure have been selected for not being the kind of people who would be content never to publish anything again, and that is precisely why giving them tenure was a good idea in the first place.

Because I feel compelled to. I was thinking about the Riemann hypothesis and other FOPs long before I had tenure, so the "because I can" tidbit doesn't tell the full story. For me, thinking about FOPs may not be an entirely rational decision; it may in fact be more of an unhealthy obsession. Would my career actually be more successful if I hadn't spent as much time as I did working on FOPs? That's a real possibility.

Why you should think about famous open problems

I'm really bad at telling people what they should or should not do. What worked for me may or may not work for you, so I never feel I can authoritatively give people advice, even if it's based on my own experience. All I can say with confidence is: working seriously on famous open problems is not the waste of time that it may appear to be at a superficial glance. In my opinion it's not a waste of time at all, at least in the same sense that pursuing any passion or hobby is not a waste of time. As I hope my analysis above makes clear, there are solidly good reasons to do it. There are also some good reasons not to do it, and excellent reasons not to overdo it. And with that very confident verdict (which I'm sure makes me an excellent candidate to run for president), I will rest my case.